Can you recall the feeling when things start falling apart and, looking back, you suddenly realize that the signs of the upcoming disaster had been there for weeks, if not months? How could you have missed them? If you have ever been in that position, one of the reasons that got you there was a lack of analytics and measurement around your product. In short, either you relied on the wrong metrics, or you didn’t collect any.

In this article, we’ll discuss three types of metrics: technical, business, and UX-related. Although they originate from different areas, all of them come down to one simple question: does your application meet your business requirements?

But before we go any further, let’s start where it all begins: with the core definition.

How to use metrics?

What is a metric?

In short, a metric is a measure of success. Metrics are used not only in software development but also in a number of other areas. Managers use metrics to:

- reduce overtime,

- increase return on investment,

- reduce losses,

- improve process efficiency,

- identify and eliminate product flaws.

But here’s the tricky thing with metrics: they’re like watermelons. Green on the surface, they might hide a big red core of trouble inside. Let’s say that despite a healthy development pace, customer churn is higher than ever. Or, despite high code coverage, adding simple and straightforward features takes weeks and gives your development team a solid headache.

To avoid the watermelon effect, you need to approach metrics with a cool head. The business environment and product design are complex enough that, apart from the hard data collected in so-called quantitative measurements, we also need “softer” metrics such as observations and opinions. Researchers call them qualitative measurements. While the former show success (or problems) at scale, the latter provide the context. To avoid overinvesting, you need to analyze your app’s health from both angles.

What to measure in that case? In theory, we could measure everything, but we prefer to repeat after William Bruce Cameron, the author of “Informal Sociology: A Casual Introduction to Sociological Thinking”:

Not everything that counts can be counted, and not everything that can be counted counts.

Applying the quote to the application domain, we could say that as much as we need measurements and metrics to rely on, what really matters are your business needs. After all, they drive software modernization.

Business objectives come first

When looking for red lights, you need to look deeper than the app itself. The quality of software impacts your entire organization. Maintaining a system that needs modernization generates considerable costs. Longer time-to-market and unpredictable behavior of components inflate your bills. Not to mention the development team, tired of patching and fixing unmaintainable and outdated software.

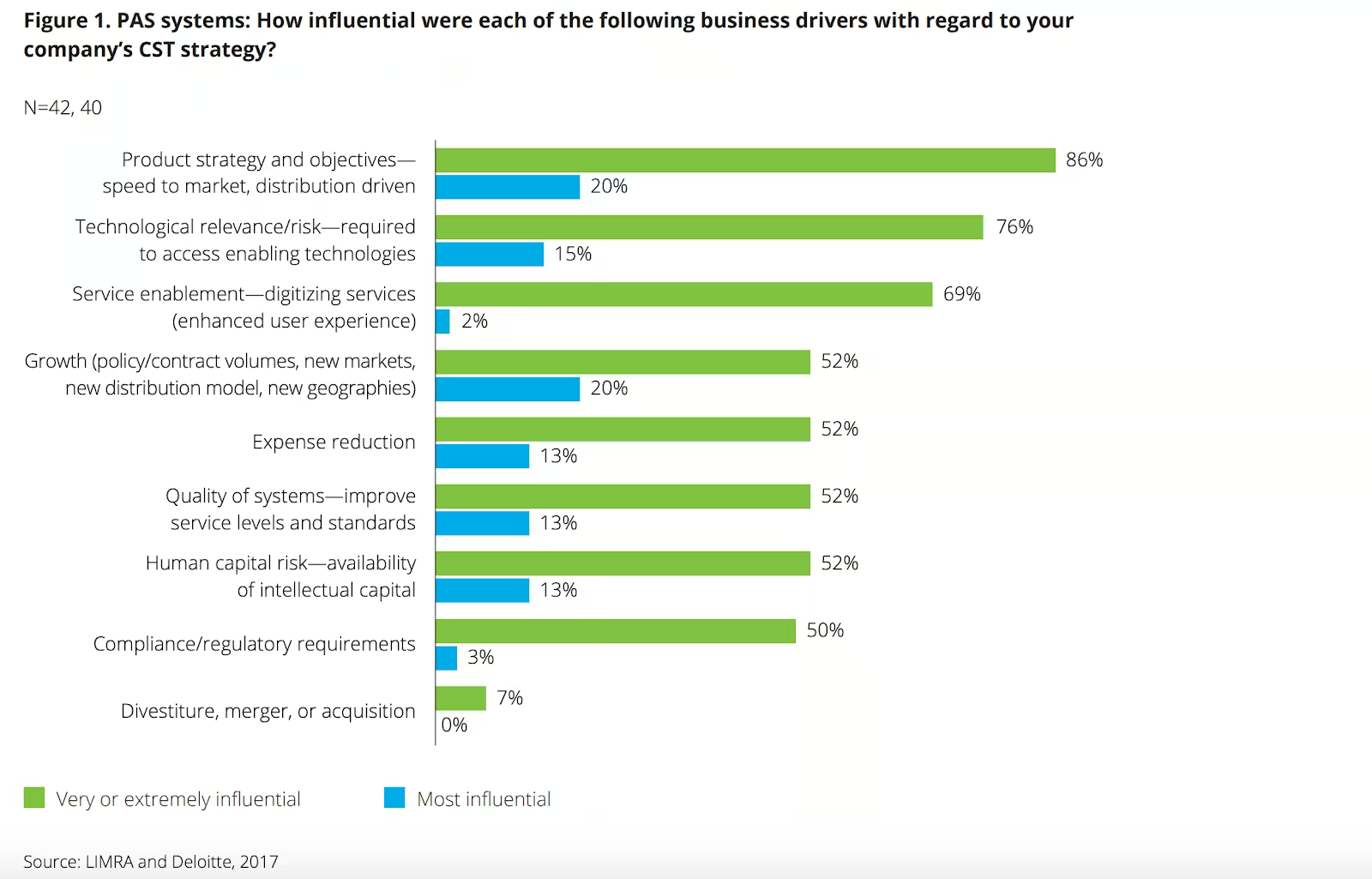

Software modernization reaches further than the product itself. Since it often touches information exchange, data analysis, or the supply chain, you might want to look at it as an opportunity to improve the productivity and financial efficiency of your internal processes. Deloitte’s Legacy system and modernization report from 2017 showed that the most influential factor in the decision to modernize was improving time-to-market. Interestingly, access to prospective technologies and the need to polish user experience placed second and third, respectively.

How to track the health of your application?

If we’re talking about an internal application, it probably slows your employees down because each action requires inside knowledge and takes ages to complete.

On the other hand, if the application brings you revenue from users’ purchases or subscriptions, you might experience drops in customer lifetime value (LTV) or loyalty.

How do you know whether to treat these signs as red or orange lights? That’s where metrics come in handy. Of course, life would be too easy if we could simply take metrics from one project and move them to another. Each application has its own business strategy, environment, and purpose, which affect not only the metrics we collect and track but also their thresholds.

Software metrics - the quality dimension

One of the most straightforward signs that your application needs modernization is the quality of the solution. From the technological perspective, we can measure quality with the percentage of successful test runs. However, it’s worth noting that application testing takes place on different levels, each with a different objective.

In the production phase, when we’re building the application from scratch, a set of unit, integration, and system tests is supposed to detect inconsistencies with our initial requirements. Why didn’t we say that tests detect “any inconsistency and malfunction of software”? That’s how the ideal situation would look. In the real world, with limited budgets or strict timelines, you’re not able to cover all possible test scenarios on all possible web and mobile devices under all possible operating systems.

Let us show you what you can measure to judge whether you can sleep soundly, knowing your app’s quality is under control.

Code coverage

If you ask what share of the lines of code (LOC) should be covered by unit tests, the immediate answer would be 100%. But when you look at contemporary applications, you’ll see that only a handful come close to 50%. Here’s why.

Quality assurance cost. It’s not only about writing scenarios, running end-to-end tests, or automation. Every change to the existing code base means changes to test scenarios and additional regression or performance tests. Keep in mind that, just like code, automated tests also need maintenance. At Merixstudio, we use API automation, among other things, to keep test maintenance costs as low as possible.

Instead of focusing on the coverage of the entire system, sometimes it makes more sense to cover key modules and critical paths. If yours are not covered, it’s high time to modernize your system.

Now, getting to the nitty-gritty: is there a clear number that indicates that the coverage is sufficient? Unfortunately not. Why? Because the devil is in the details. You can test whatever you want in your code (even whether a notification’s text is a string). The question is: would such a test give you any relevant information? Maybe it would make much more sense to check whether the notification appears on the right page and under the expected conditions?
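To make the difference concrete, here is a minimal Python sketch. The `notification_for` function and its rule (remind users about a non-empty cart on the home page) are hypothetical and exist only for illustration. Both tests execute the same code, so both count towards coverage, but only the second one tells you whether the feature behaves as intended.

```python
def notification_for(page, cart_items):
    """Hypothetical rule: remind users about a non-empty cart on the home page."""
    if page == "home" and cart_items > 0:
        return "You have items waiting in your cart"
    return None


def test_text_is_a_string():
    # Raises coverage, but says little about whether the feature works.
    assert isinstance(notification_for("home", 1), str)


def test_shown_only_under_expected_conditions():
    # Checks the behaviour that matters: right page, right conditions.
    assert notification_for("home", 2) is not None
    assert notification_for("home", 0) is None
    assert notification_for("checkout", 2) is None
```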

Service availability

Looking at the quality audits we’ve made, we can say that performance (or rather the lack of it) is the most frequent issue clients come to us with. Having built their application years earlier, or in a hurry that compromised quality, they realize at a certain point that the app can’t run efficiently anymore. What does that mean? On the surface, it means that pages take too long to load or crash. But that’s the result, not the issue itself.

Most performance issues are related to inadequate project requirements. What seemed reasonable and sufficient when we released the application for the first time might not be enough when it’s time to scale. During rapid growth, a business rarely finds time to re-assess and rebuild the existing infrastructure or architecture.

The result is that we’re building “for today”, not “for tomorrow”. So when tomorrow comes, our systems underperform.

How can you track your app’s performance in numbers?

- Track uptime percentage.

- Measure the mean time between failures (MTBF) and the mean time to recover (MTTR).

- Follow bug fixing. If your team needs days to fix a simple bug, it is likely due to complex information architecture or messy code.
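The first two measurements in the list come down to simple arithmetic over an incident log. Below is a minimal Python sketch, assuming a hypothetical list of outages with start and end times inside a fixed observation window. The `Outage` type, the function name, and the sample dates are illustrative and not taken from any monitoring tool.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Outage:
    """One period of downtime (hypothetical record format)."""
    start: datetime
    end: datetime


def availability_report(window_start, window_end, outages):
    """Return uptime percentage, MTBF and MTTR for the observation window."""
    total = window_end - window_start
    downtime = sum((o.end - o.start for o in outages), timedelta())
    uptime = total - downtime
    failures = len(outages)
    return {
        "uptime_pct": 100 * uptime / total,
        # Mean time between failures: operating time divided by failure count.
        "mtbf": uptime / failures if failures else None,
        # Mean time to recover: average outage duration.
        "mttr": downtime / failures if failures else None,
    }


# Hypothetical 30-day window with two outages lasting 2 and 4 hours.
start = datetime(2024, 1, 1)
report = availability_report(
    start,
    start + timedelta(days=30),
    [
        Outage(start + timedelta(days=3), start + timedelta(days=3, hours=2)),
        Outage(start + timedelta(days=20), start + timedelta(days=20, hours=4)),
    ],
)
```

With these sample values, six hours of downtime in a 720-hour window gives an uptime of roughly 99.17%, a mean time between failures of 357 hours, and a mean time to recover of 3 hours.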

Number of bugs

Easy as it sounds, the number of bugs is the simplest metric you can track. To have a reference, you can compare the number of bugs between delivery cycles or sprints. You can also measure the number of bugs coming from regression testing. However, one thing to keep in mind when relying on this metric is that not all bugs are created equal. What you should aim for is a number that gets lower with every sprint.

Defect Removal Efficiency

In short, that’s how efficiently your team deals with bugs discovered prior to release. Let’s say we discovered 10 bugs in a sprint. If developers fixed 8 of them before the release, their defect removal efficiency (DRE) would be 80%. If your team is dealing with a dropping DRE, beware. That’s where a warning light should come on. The chances are that without modernization, you’ll quickly get stuck with unresolved issues piling up with every development cycle.
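The calculation above can be sketched in a few lines of Python. This follows the definition used in this article (bugs fixed before release divided by bugs discovered before release); the function name and the per-sprint counts are hypothetical. Treating a sprint with no discovered bugs as 100% is also an assumption, and some teams prefer to relate the figure to defects found after release as well.

```python
def defect_removal_efficiency(found_before_release, fixed_before_release):
    """Share of pre-release bugs fixed before the release, in percent."""
    if found_before_release == 0:
        return 100.0  # nothing to fix; treated as fully efficient (assumption)
    return 100 * fixed_before_release / found_before_release


# Hypothetical per-sprint counts: (bugs found, bugs fixed before release).
sprints = [(10, 9), (10, 8), (12, 8)]
dre_trend = [defect_removal_efficiency(found, fixed) for found, fixed in sprints]

# A DRE that falls sprint after sprint is the warning light described above.
is_dropping = all(later < earlier for earlier, later in zip(dre_trend, dre_trend[1:]))
```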

When do developers know it’s time for software modernization?

Beyond the quality metrics mentioned above, there is one we consider the queen of them all. It’s called maintainability, and it shows whether the software team is able to react to changing business requirements without rewriting the entire application.

Applications with high maintainability are easy to extend with new features and third-party integrations. In contrast, low maintainability exposes the system to very long and inefficient development cycles, security threats, and unexpected malfunctions.

In order to assess the health of the codebase, apart from code coverage, developers track several metrics that help them point out rotting areas that need refactoring.

Outdated libraries and security threats

When it comes to code, old school is not exactly what you should be looking for. Outdated versions of libraries are a serious threat to your application’s maintainability. Seriously. It’s the same as with the software on your computer. Are you still running Windows Vista? Probably not.

Still, according to the 0patch Survey Report, 58% of organizations run on platforms that are no longer supported with patches. What makes them stick to the old solutions? The cost of replacing the old school with new tech, or the concern that patches would “break stuff”.

If you don’t want to put yourself in such an uncomfortable position, you need to track the versions of libraries used in your system and systematically patch them against security vulnerabilities. An out-of-date codebase is prone to security breaches because its vulnerabilities remain unpatched while becoming well known to the public.
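As a rough illustration of such tracking, the Python sketch below compares installed versions against the first version known to contain a security fix. Everything in it is an assumption made for the example: the library names and version numbers are invented, and the parser only handles purely numeric dotted versions. In practice, this data would come from your dependency manifest and a vulnerability feed.

```python
def parse_version(text):
    """Turn '2.31.0' into (2, 31, 0); assumes purely numeric dotted versions."""
    return tuple(int(part) for part in text.split("."))


def find_outdated(installed, minimum_patched):
    """Return libraries whose installed version is below the first patched one."""
    return sorted(
        name
        for name, version in installed.items()
        if name in minimum_patched
        and parse_version(version) < parse_version(minimum_patched[name])
    )


# Hypothetical library names and versions, for illustration only.
installed = {"imagelib": "1.4.2", "httpclient": "2.10.0", "yamlparser": "5.1"}
minimum_patched = {"imagelib": "1.4.9", "httpclient": "2.9.1"}
outdated = find_outdated(installed, minimum_patched)
```

Versions are compared as tuples of integers rather than as strings, so that 2.10.0 is correctly treated as newer than 2.9.1.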

Application complexity

Expressed by the number of decision points and their dependencies, application complexity shows how easy (or difficult) it is to check the proper behavior of our system. To calculate complexity, developers use tools that draw flow graphs and then count the number of independent paths.

One of the most popular methods used in software development is McCabe’s cyclomatic complexity.

Applications with higher complexity numbers tend to have a messy structure, sub-optimal technology solutions, and multiple application elements dependent on one another. You can easily deduce that the higher the complexity, the lower the maintainability.
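For a feel of how the number comes about, here is a simplified Python sketch that approximates cyclomatic complexity as the number of decision points plus one, using the standard `ast` module. It is an approximation under stated assumptions: it scores a whole source string rather than individual functions, it counts only the node types listed, and the `shipping_cost` sample is hypothetical. Dedicated static-analysis tools are more thorough.

```python
import ast

# Node types treated as one decision point each (a simplification).
_BRANCH_NODES = (ast.If, ast.For, ast.While, ast.ExceptHandler, ast.IfExp)


def cyclomatic_complexity(source):
    """Approximate McCabe complexity: decision points + 1."""
    complexity = 1
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, _BRANCH_NODES):
            complexity += 1
        elif isinstance(node, ast.BoolOp):
            # `a and b and c` adds two extra paths.
            complexity += len(node.values) - 1
    return complexity


# Hypothetical function used only as input for the calculation.
sample = '''
def shipping_cost(order):
    if order.total > 100 or order.is_premium:
        return 0
    for item in order.items:
        if item.oversized:
            return 25
    return 10
'''
score = cyclomatic_complexity(sample)
```

For the sample, the two `if` statements, the `for` loop, and the `or` operator add four decision points to the base value of one, so `score` is 5.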

Code smells

Besides bugs and errors that show your software malfunctions, developers look for violations of the fundamentals of software development, often called code smells. This metric might have a funny name, but the consequences it brings are not funny at all.

What can smell inside your code? Anything that is not clean, understandable, and justified. Inconsistent naming, duplicated code, or message chains are only a handful of examples.

Interestingly, smelly code can execute and work in line with the business requirements. However, the smellier it gets, the more difficult it is to develop new functionalities and update the existing ones. With time, code smells increase the risk of bugs and failures.

The best way to avoid code smells is to follow the patterns of clean code and make peer or automatic code reviews a part of your software development routine.

A solid pull request review process can mitigate the risk of smelly code no one wants to sniff.

Time to make a change

It’s hard to imagine that after the first release, your software would be left untouched. Therefore, you will be faced with the need to extend your specification with new features, or even make changes to the existing ones. Now, if you keep track of the time your team needs to complete such tasks, it can become one of the key indicators of a growing software maintainability problem.

If making changes becomes an unpredictable process that generates bugs and makes developers spend days on troubleshooting, rewriting the application from scratch becomes a likely alternative.
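One simple way to keep an eye on this indicator is to record how long each change takes and compare a typical value between sprints. The Python sketch below assumes hypothetical figures (days per change request, grouped by sprint) and uses the median as the typical value; both the data and the choice of the median are illustrative.

```python
from statistics import median

# Hypothetical data: days needed to complete each change request, per sprint.
days_per_change = {
    "sprint 1": [1, 2, 2, 3],
    "sprint 2": [2, 3, 3, 5],
    "sprint 3": [3, 4, 6, 9],
}

# The median is less sensitive to a single unusually large task than the mean.
median_by_sprint = {sprint: median(days) for sprint, days in days_per_change.items()}

values = list(median_by_sprint.values())
# Flag the trend when every sprint is slower than the one before it.
slowing_down = all(later > earlier for earlier, later in zip(values, values[1:]))
```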

User Experience perspective on metrics for software modernization

How do you avoid missing the moment when there’s still time to react and save your meticulously developed system from becoming a relic of past IT trends? Besides quality and development, there is one more group of metrics you should never neglect: the information from your users.

Of course, as in the areas already mentioned, the diversity of signs that your app needs modernization is enormous, ranging from the number of users and the time spent in the application to your market value. Although a drop in any of them shows that things are going wrong, it doesn’t necessarily mean your code is the reason.

It’s almost impossible to measure and analyse every metric we can get our hands on. Therefore, let us present three indicators that will allow you to add the user’s perspective to the equation.

Complaints

High customer churn, dropping traffic, and negative customer feedback are signs that you need to modernize either your processes or your product.

Our findings show that people tend to quit using an application for several reasons, including:

- performance failures and glitches,

- incompatibility with popular services (e.g. social media) and systems,

- outdated tech and functionalities (e.g. Flash or Microsoft Silverlight),

- outdated aesthetics of the interface and design.

The good news is that if you have a service desk, you already track the number of tickets, analyse them by type, and monitor the most frequent complaints, don’t you? If not, you should start doing it right away. Statistics from your customer success team are closely connected with your application’s usability, no matter if we’re talking about a customer-facing app or an internal IT system. Enriched by conclusions from a UX audit, complaint analysis will help you assess whether the improvements you need are manageable given the condition of your software.

Lost time

Now we’re getting to a more eye-opening metric: time. While insights from customer service apply mostly to customer-facing apps, lost time tends to characterize enterprise legacy systems. When completing simple activities requires too much time and effort on the users’ part, it often leads to users devising a workaround. As a result, instead of helping to move things forward, the system makes operations more complex, longer, and not intuitive at all.

Not a big deal? Think again. Let’s go back to our customer service center. If employees need to jump between application views or consult an external source of information to resolve a task, the time to resolution is probably two or three times longer than expected. That’s a warning sign that your company is losing time (and therefore money). With the problem fixed, you could close more tickets, process more sales, or generate more revenue.

Maintenance cost

Let’s assume that you decided to re-model your process so that your customer service can solve issues in 50% of the time they previously needed. You can easily calculate how much money your business could save. The same goes for the budget you need to implement the changes in the existing code, doesn’t it?

Having read about the quality and development metrics, you already know it’s not that simple. Growing development time, difficulties in finding developers for a legacy project, and complexity that makes one thing break another all make the budget extremely difficult to predict. Still, if your team is dealing with these obstacles, you can be sure your maintenance cost will never drop. In the long term, it might be less costly to build a new solution than to keep the old one alive.
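The savings half of this comparison is simple arithmetic, and a short Python sketch shows it. All inputs are hypothetical (ticket volume, handling time, hourly cost), and the function name is made up for the example. The implementation budget is left out on purpose because, as noted above, it is the part that is hard to predict.

```python
def yearly_savings(tickets_per_year, minutes_per_ticket, time_reduction, hourly_cost):
    """Money saved per year when each ticket takes `time_reduction` less time."""
    hours_saved = tickets_per_year * minutes_per_ticket * time_reduction / 60
    return hours_saved * hourly_cost


# Hypothetical inputs: 20,000 tickets a year, 30 minutes each, handled in
# 50% of the previous time, at a loaded cost of 40 (any currency) per hour.
savings = yearly_savings(20_000, 30, 0.5, 40)
```

With these assumed inputs, halving the handling time frees up 5,000 hours a year, worth 200,000 in the chosen currency.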

How to stay calm when everything burns?

To stay sane, you need to rely not on hunches but on data. According to Gartner’s study, CIOs agree that a reasonable metric ratio is 56% of data related to business outcomes and 44% related to software metrics.

Such results show that business requirements and constraints always come before technology. That’s the bottom-line criterion for every software project. Whatever metrics you decide to track, they need to show whether the cost of development exceeds the value to the business. Or maybe it’s the other way round?

Quality and development metrics show clearly where businesses should invest their money. As long as the costs of development are lower than those of maintenance, modernization seems the right way to go. Thanks to faster time-to-market, an easier recruitment and onboarding process, and a chance to improve information flows, modernization can give you leverage, especially in a dynamic and competitive environment.

